Your thoughts are your own, right? Perhaps not. New technology is bringing closer the day when the unscrupulous may actually be able to hack human thoughts.

That prospect raises a number of new ethical concerns for the brave new world we are entering.

Mind Reading

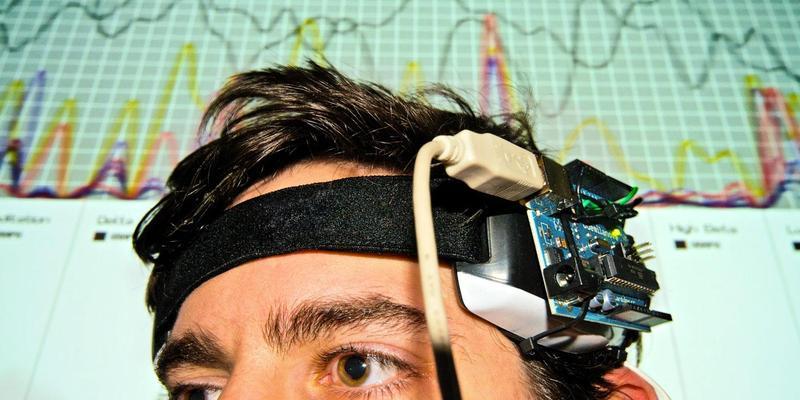

Everyone is familiar with the concept of hacking. It is why we all strive to protect our computers and smartphones from outsiders trying to break in to steal information or implant malware. Hackers pose a threat to everyone, from teenage smartphone users to government organizations and their databases. It is a familiar threat, and one that many people know how to guard against. But as the line between science and science fiction blurs, even hacking is getting a futuristic upgrade. Recently, at the Enigma Security Conference, University of Washington researcher and lecturer Tamara Bonaci revealed technology that could be used to essentially “hack” into people’s brains.

She built this technology around a game called Flappy Whale. While people played, the technology covertly extracted their neural responses to subliminal imagery in the game, such as logos, restaurants, and cars. Hacking into someone’s underlying feelings about a fast food restaurant doesn’t seem like it could cause much harm, but the same technology has the potential to gather far more intimate information about a person, such as their religion, fears, prejudices, or health. It could evolve from an interesting way to understand human responses into a military device. The possibilities range from an incredibly useful research tool to a potentially frightening interrogation device.

Bonaci’s research focuses on cyber security and privacy, especially in conjunction with biomedical devices. Her experiment infers neural responses from a person’s electrophysiological signals. After the Enigma conference, Bonaci told Ars Technica, “Electrical signals produced by our body might contain sensitive information about us that we might not be willing to share with the world. On top of that, we may be giving that information away without even being aware of it.”

The Future

While this technology isn’t yet capable of complete mind reading, Bonaci is sure that, combined with virtual reality (VR) headsets, fitness apps that use physical devices, modified brain-computer interface (BCI) equipment, or other combinations of software and hardware, it could ultimately allow researchers to retrieve a much wider variety of sensitive electrical signals from humans.

As biomedical technology and the methods that bring us closer to our electronics continue to develop and improve, Bonaci’s experiment will become increasingly relevant. While there is no current need to panic about having our minds read without our consent or knowledge, the technology could raise very real ethical concerns in the future. We will one day have to think of the electrical signals that we produce biologically as data that could be stolen, manipulated, or used against us.

via Futurismo
